This post is part of our Q&A series.

A question from graduate students in our Spring 2021 offering of the new course “Targeted Learning in Practice” at UC Berkeley:

Question: Hi Mark,

Statistical analyses of high-throughput sequencing data are often complicated by unmeasured sources of technical and biological variation. Potentially unmeasured technical factors include the date and time at which individual samples were prepared for sequencing and the lab personnel who performed the experiment.

Question: Hi Mark,

After the lectures on `tmle3shift` and `tmle3mediate`, we’re wondering whether a different procedure for mediation analysis could work. Consider a data-generating system for $O = (W, A, Z, Y)$, where $W$ represents three binary covariates, $A$ is a binary exposure of interest, $Z$ represents three binary mediators, and $Y$ is a continuous outcome.
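To make the setup concrete, the following Python sketch simulates draws from one data-generating system with this structure. Only the shape of $O = (W, A, Z, Y)$ comes from the question; the logistic and linear forms and every coefficient are hypothetical choices for illustration, not the system the students have in mind.

```python
import math
import random


def expit(x: float) -> float:
    """Logistic function mapping the real line to (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def simulate(n: int, seed: int = 0) -> list[dict]:
    """Draw n i.i.d. copies of O = (W, A, Z, Y).

    All functional forms and coefficients are hypothetical.
    """
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        # W: three binary baseline covariates
        w = [int(rng.random() < 0.5) for _ in range(3)]
        # A: binary exposure whose probability depends on W
        a = int(rng.random() < expit(-0.5 + 0.4 * sum(w)))
        # Z: three binary mediators, each depending on one covariate and on A
        z = [int(rng.random() < expit(-1.0 + 0.3 * w[j] + 0.8 * a)) for j in range(3)]
        # Y: continuous outcome depending on W, A and Z, plus Gaussian noise
        y = 1.0 + 0.5 * sum(w) + 1.0 * a + 0.7 * sum(z) + rng.gauss(0.0, 1.0)
        data.append({"W": w, "A": a, "Z": z, "Y": y})
    return data
```

For instance, `simulate(500, seed=1)` returns 500 observations; fixing the seed keeps a comparison of candidate mediation procedures reproducible.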

Question: Hi Mark,

I have a question about applying CV-TMLE to a current research project. I have a cross-sectional dataset from Bangladesh in which the outcome of interest is antenatal care use (binary), the exposure of interest is women’s empowerment (continuous), and the baseline covariates include mother’s age, child’s age, mother’s and father’s education, number of household members, number of children under 15, household wealth, and maternal depression.
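CV-TMLE relies on V-fold sample splitting: initial estimators of the nuisance functions are fit on each training sample and evaluated on the corresponding validation sample. A minimal Python sketch of the fold construction follows; the function name `make_folds` and the default $V = 10$ are assumptions, and the fitting and targeting steps are omitted.

```python
import random


def make_folds(n: int, v: int = 10, seed: int = 0) -> list[tuple[list[int], list[int]]]:
    """Randomly partition observation indices 0..n-1 into v folds.

    Returns one (training_indices, validation_indices) pair per fold. In a
    cross-validated estimator, nuisance functions would be fit on the training
    indices and their predictions used only on the validation indices.
    """
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    # Deal the shuffled indices round-robin into v folds of nearly equal size
    folds = [sorted(idx[k::v]) for k in range(v)]
    splits = []
    for k in range(v):
        validation = folds[k]
        training = sorted(i for j, fold in enumerate(folds) if j != k for i in fold)
        splits.append((training, validation))
    return splits
```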

Question: Hi Mark,

In Chapter 6 of your 2018 book *Targeted Learning in Data Science*, co-authored with Sherri Rose, you discuss the practical necessity of reducing the number of basis functions incorporated into the highly adaptive lasso (HAL) estimator as the number of covariates grows.
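A rough sense of why this reduction is necessary: in the zero-order HAL, each basis function is an indicator $\prod_{j \in s} 1\{x_j \ge \kappa_j\}$ for a nonempty subset $s$ of the $d$ covariates, with knots $\kappa$ placed at observed values, which gives at most $n(2^d - 1)$ basis functions for $n$ observations. The Python sketch below enumerates them for small data and prints the bound for larger $d$; it is an illustration under these assumptions, not the implementation in any HAL software.

```python
from itertools import combinations


def hal_basis(X):
    """Enumerate zero-order HAL indicator basis functions for data X.

    X is a list of n observations, each a list of d covariate values. Each
    basis function is a pair (s, knot): s is a nonempty subset of covariate
    indices and knot holds one observation's values on s.
    """
    d = len(X[0])
    basis = []
    for size in range(1, d + 1):
        for s in combinations(range(d), size):
            for row in X:
                basis.append((s, tuple(row[j] for j in s)))
    return basis


def evaluate(basis_fn, x):
    """Return prod_{j in s} 1{x_j >= knot_j} for the basis function (s, knot)."""
    s, knot = basis_fn
    return int(all(x[j] >= k for j, k in zip(s, knot)))


def max_basis_count(n, d):
    """Upper bound n * (2**d - 1); duplicate knots can only lower the count."""
    return n * (2 ** d - 1)


for d in (3, 10, 20):
    print(d, max_basis_count(1000, d))
```

With $n = 1000$, the bound grows from 7,000 basis functions at $d = 3$ to roughly $10^9$ at $d = 20$.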

Question: Hi Mark,

A pressing issue in the field of global public health is equitable ownership of data and results, in terms of both authorship and representation. In some respects, targeted learning improves equity by bolstering our ability to efficiently draw causal inferences from global health data.

Question: Hi Mark,

I am curious, in general, about how to approach problems that involve machine learning-based estimation of densities rather than of conditional means (i.e., regression), particularly for continuous variables. As a grounding example, for continuous treatments in the TMLE framework one needs to estimate the conditional density $p(A \mid W)$, where $A$ is a continuous random variable.
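One common way to turn conditional density estimation into a prediction problem is discretization: split the range of $A$ into bins, estimate the probability of each bin given $W$, and divide by the bin width. In the minimal Python sketch below, the bin probabilities are empirical frequencies within strata of a discrete $W$; a machine-learning version would replace them with a classifier (or a hazard regression pooled over bins) that takes $W$ as input. The function name, equal-width bins, and discrete $W$ are assumptions for illustration.

```python
from collections import defaultdict


def binned_conditional_density(a_vals, w_vals, n_bins, a_min, a_max):
    """Histogram estimate of p(a | w) for a continuous A and a discrete W.

    Within each stratum of W, the density on a bin equals
    P(A in bin | W = w) / bin_width, so it integrates to 1 over [a_min, a_max].
    """
    width = (a_max - a_min) / n_bins
    counts = defaultdict(lambda: [0] * n_bins)  # per-stratum bin counts
    totals = defaultdict(int)                   # per-stratum sample sizes

    def bin_of(a):
        # Clamp so that a == a_max falls into the last bin
        return min(int((a - a_min) / width), n_bins - 1)

    for a, w in zip(a_vals, w_vals):
        counts[w][bin_of(a)] += 1
        totals[w] += 1

    def density(a, w):
        # Unseen strata and values outside the range get zero density
        if totals.get(w, 0) == 0 or not (a_min <= a <= a_max):
            return 0.0
        return counts[w][bin_of(a)] / (totals[w] * width)

    return density
```

The number of bins plays the role of a smoothing parameter, so in practice it would itself be chosen by cross-validation.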

Question: Hi Mark,

I have a survival analysis question. I am working with a dataset that is left- and right-truncated, and I am interested in estimating the treatment-specific multivariate survival function of a time-to-event variable. Consider, for example, a study in which subjects have been randomized to two treatment groups and have baseline covariates $W$, but the outcome (time of death) is observed only within a left- and right-truncated window.
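To make the data structure concrete, here is the standard conditional density for doubly truncated data, under the assumption (not stated in the question) that the truncation window $[L, R]$ is independent of the event time $T$ given $(A, W)$. Writing $f(t \mid a, w)$ for the conditional density of $T$ and $F(t \mid a, w)$ for its distribution function, a subject is observed only if $L \le T \le R$, and an observed event time has density

$$
f_{\mathrm{obs}}(t \mid \ell, r, a, w) = \frac{f(t \mid a, w)}{F(r \mid a, w) - F(\ell^{-} \mid a, w)}, \qquad \ell \le t \le r.
$$

The denominator is the probability that the event falls inside the observation window; treating the observed times as a random sample from $f$ and ignoring it generally biases the estimated survival function.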

Question: Hi Mark,

I have a question regarding the requirements for asymptotic efficiency of TMLE.

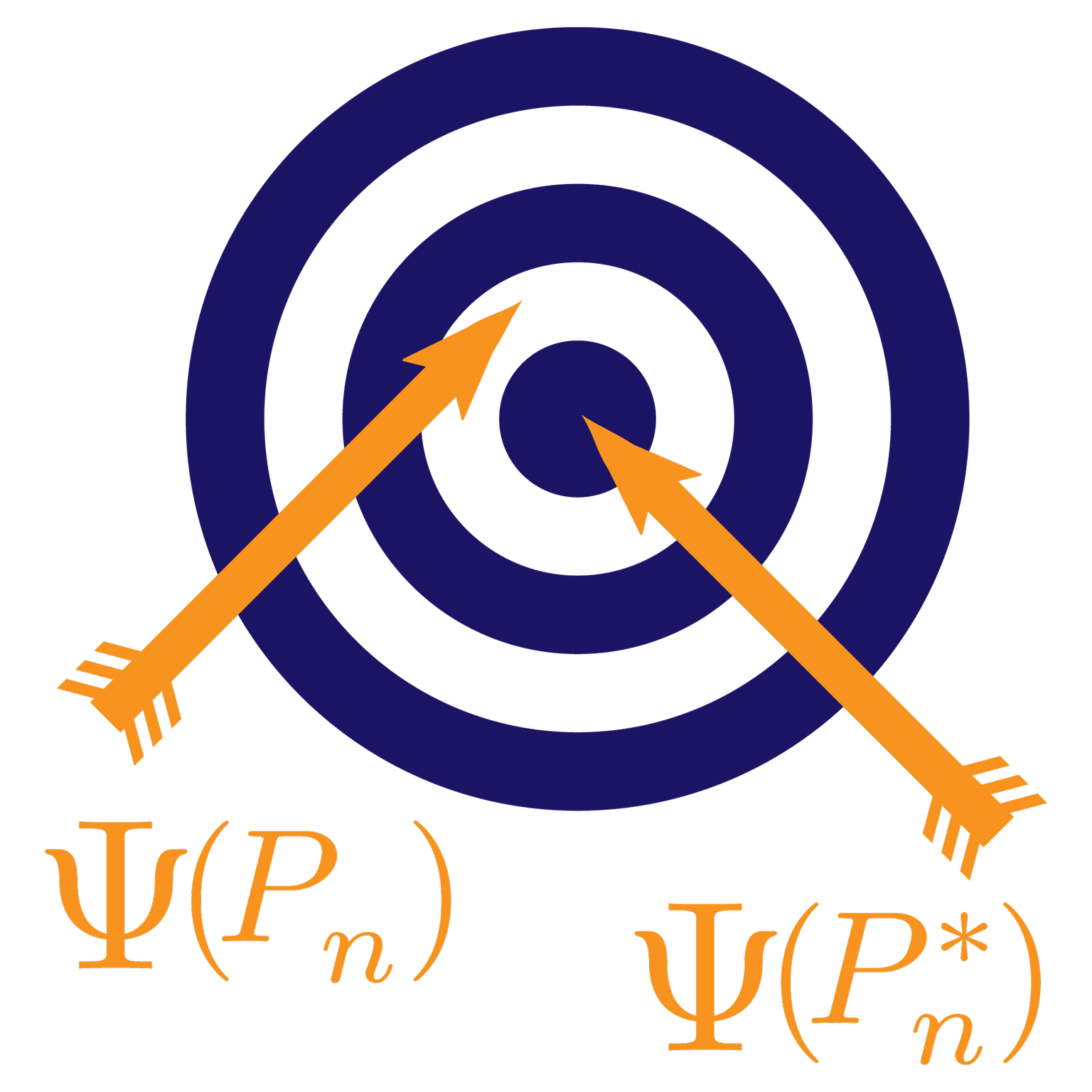

Asymptotic efficiency of TMLE relies on the second-order remainder being negligible, that is, of order $o_P(n^{-1/2})$. Is this purely a finite-sample concern, or are there parameters of interest for which the remainder is not negligible by construction?
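For reference, the standard expansion behind this requirement, in generic notation ($\Psi$ the target parameter, $D^*$ its canonical gradient, $P_n^*$ the targeted estimate, $R_2$ the second-order remainder), is

$$
\Psi(P) - \Psi(P_0) = -P_0 D^*(P) + R_2(P, P_0).
$$

Because the targeting step solves $P_n D^*(P_n^*) = 0$ (up to $o_P(n^{-1/2})$), applying this at $P = P_n^*$ gives

$$
\Psi(P_n^*) - \Psi(P_0) = (P_n - P_0) D^*(P_0) + (P_n - P_0)\{D^*(P_n^*) - D^*(P_0)\} + R_2(P_n^*, P_0),
$$

so efficiency requires both the empirical process term and $R_2(P_n^*, P_0)$ to be $o_P(n^{-1/2})$. As a worked example, for the treatment-specific mean $\Psi(P) = E_P[\bar Q(1, W)]$ the remainder is a product of estimation errors,

$$
R_2(P, P_0) = E_0\left[\left(1 - \frac{g_0(1 \mid W)}{g(1 \mid W)}\right)\{\bar Q(1, W) - \bar Q_0(1, W)\}\right],
$$

which is negligible when the product of the convergence rates of $g$ and $\bar Q$ is faster than $n^{-1/2}$.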

Question: Hi Mark,

As epidemiologists, we wish to study the relationship between a time-varying exposure and disease progression over time. A natural study design is the longitudinal cohort study. In prospective cohorts, participants are not selected from existing data but are enrolled during a defined enrollment period.

Question: Hi Mark,

We were discussing practical implementations of stochastic treatment regimes and came up with the following questions, on which we would like to hear your thoughts.

Question 1 (Practical positivity): Is there a recommended procedure for choosing the truncation threshold for shifts in the framework of stochastic treatment regimes?
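For an additive shift $A \to A + \delta$, the TMLE clever covariate involves the density ratio $g(a - \delta \mid w) / g(a \mid w)$, and very large ratios signal practical positivity problems. One possible diagnostic, offered as a sketch rather than a recommended rule, is to compute these ratios for several candidate $\delta$ values and inspect their maximum and the share exceeding a chosen threshold. The conditional density `g` below (Normal with mean $w$ and unit variance), the threshold of 10, and the toy data are all hypothetical.

```python
import math


def normal_pdf(x, mean, sd):
    """Density of a Normal(mean, sd^2) distribution at x."""
    return math.exp(-0.5 * ((x - mean) / sd) ** 2) / (sd * math.sqrt(2.0 * math.pi))


def g(a, w):
    """Hypothetical conditional density of A given W: Normal(w, 1)."""
    return normal_pdf(a, mean=w, sd=1.0)


def shift_ratios(a_vals, w_vals, delta, density=g):
    """Ratios density(a - delta | w) / density(a | w) for the shift A -> A + delta."""
    return [density(a - delta, w) / density(a, w) for a, w in zip(a_vals, w_vals)]


def share_above(ratios, threshold):
    """Fraction of observations whose density ratio exceeds the threshold."""
    return sum(r > threshold for r in ratios) / len(ratios)


# Toy data: inspect how the ratios grow with the size of the shift
a_obs = [0.0, 1.0, 3.0, -1.0]
w_obs = [0.0, 0.0, 0.0, 0.0]
for delta in (0.5, 1.0, 2.0):
    r = shift_ratios(a_obs, w_obs, delta)
    print(delta, max(r), share_above(r, 10.0))
```

Under this Normal model the ratio equals $\exp\{\delta(a - w) - \delta^2/2\}$, so it grows quickly for observations far above their conditional mean, which is where a shift pushes $A$ toward regions with little support.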